If you're looking to unblock Facebook or Messenger at school, at work, or in a country like Russia, then a VPN can help! By using a VPN for Facebook, you can get unrestricted access to your favorite social media sites on any Wi-Fi network – even if they're blocked by your network admin or government. It also lets you unblock geo-restricted content and other services.

In this guide, we list the best VPNs for Facebook and give you some top tips on how to unblock it.

What are the best VPNs to Unblock Facebook & Messenger?

We have listed the best VPNs for unblocking Facebook at school or work below. Scroll past this list for a closer look at each of our recommended services.

- ExpressVPN - The best VPN for Facebook. It's a secure service with great privacy features, lightning-fast servers, and a 30-day money-back guarantee.

- NordVPN - The best mid-range Facebook VPN. You get fast connections, sleek apps for all major operating systems, and premium privacy features – affordably!

- Surfshark - The best value-for-money Facebook VPN. It works seamlessly with Facebook, it's super fast, and offers unlimited connections.

- Private Internet Access - The most secure Facebook VPN. Unblocks social platforms, with advanced privacy features and a strict zero-logs policy.

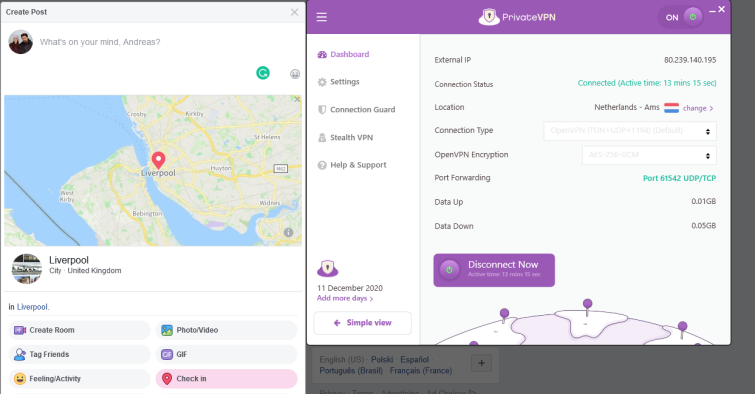

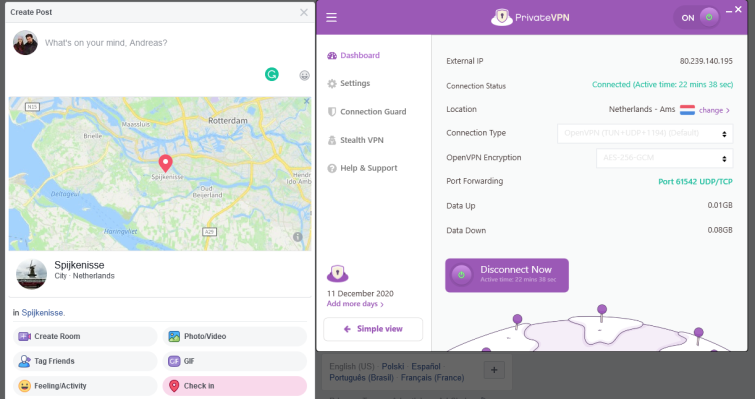

- PrivateVPN - The best Facebook VPN for beginners, with easy-to-use apps for all platforms, smooth performance, and cheap plans.

EXPERIENCE EXPRESSVPN WITH A 30-DAY FREE TRIAL

Sign up through this page to try ExpressVPN, the #1-rated VPN for privacy, risk-free for 30 days. It's an especially good option if you're keen to try the best VPN for Facebook & Messenger.

The terms are transparent: if you're not satisfied, contact the support team within 30 days for a hassle-free refund.

All the VPNs above will reliably unblock Facebook on restricted Wi-Fi networks. Before we include a VPN in our list, we make sure it has each of the following:

- Fast servers located around the world

- The ability to unblock Facebook and other sites

- Great online privacy and security features

- Apps for all major platforms

These services also have their own unique features. We give you more information about each VPN below, so pick the one which best fits your personal needs.

| | ExpressVPN (test winner) | NordVPN | Surfshark | Private Internet Access | PrivateVPN |
|---|---|---|---|---|---|
| Ranking for Facebook and Messenger | 1 | 2 | 3 | 4 | 5 |
| Performance | 10 | 9 | 9 | 8 | 7 |
| Reliability | 9 | 8 | 8 | 8 | 7 |
| Free trial | | | | | |
| Total servers | 3000 | 6200 | 3200 | 3386 | 200 |
| Payment | PayPal, Visa/MasterCard, Amex, cryptocurrency | PayPal, Visa/MasterCard, Amex, cryptocurrency | PayPal, Visa/MasterCard, Amex, cryptocurrency | PayPal, Visa/MasterCard, Amex, cryptocurrency | PayPal, Visa/MasterCard, Amex, cryptocurrency |
| Unblocks | Netflix, iPlayer, Disney+, Amazon Prime, Hulu | Netflix, iPlayer, Disney+, Amazon Prime, Hulu | Netflix, iPlayer, Disney+, Amazon Prime, Hulu | Netflix, iPlayer, Disney+, Amazon Prime, Hulu | Netflix, iPlayer, Disney+, Amazon Prime, Hulu |
| Supported platforms | Windows, macOS, iOS, Android | Windows, macOS, iOS, Android | Windows, macOS, iOS, Android | Windows, macOS, iOS, Android | Windows, macOS, iOS, Android |

The Best VPNs for Facebook & Messenger | In-depth analysis

We take a more in-depth look at our recommended Facebook VPN services here. If you still want more information about these services, check out our detailed VPN reviews.

1. ExpressVPN

ExpressVPN is the best VPN for Facebook. It's a reliable and secure service that unblocks plenty of sites thanks to its fast servers located worldwide, and it comes with a 30-day money-back guarantee.

ExpressVPN is my top pick for unblocking Facebook! Its global network of robust and lightning-fast servers gives users plenty of choice when it comes to spoofing their location – and its apps look great, are easy to use, and are compatible with pretty much any device. Add in top-notch encryption and security features, and you've got a premium service that's well worth the pricey subscription fee.

Always fast, always reliable

ExpressVPN makes it incredibly easy to unblock Facebook, whether you're at home, at school, or at work – and that's thanks to the VPN's huge pool of servers in 105 countries across the globe. You can browse through ExpressVPN's server locations in a handy list, take your pick, and connect in a few taps, or let the provider do the work for you by hitting the Smart Location option. This feature uses an algorithm to determine the best server for you, based on download speed and distance, and is especially useful for mobile users looking to unblock Facebook with a minimum of fuss.

I'm constantly recommending ExpressVPN to folks looking to unblock the world's most in-demand streaming services, too! The VPN provides reliable access to sites like Netflix, BBC iPlayer, Amazon Prime, and Hulu, as well as local sites that might be blocked when traveling abroad. What's more, ExpressVPN is seriously quick, so you don't need to worry about that dreaded buffering circle interrupting your movie marathon.

Proven security

When you're trying to access Facebook to stay in touch with family and friends, the last thing you want to worry about is whether your ISP (or even your government) is keeping tabs on your every digital move. ExpressVPN puts a stop to any snooping with strong OpenVPN encryption, as well as a selection of additional protocols to choose from. Lightway, ExpressVPN's own proprietary protocol, is especially speedy and could be a match made in heaven for avid streamers and gamers. I also like that the provider's kill-switch is enabled by default and will step in to prevent your original IP address from leaking if the VPN connection drops.

ExpressVPN also gets full marks for its zero-logs policy. The service doesn't keep records of your connection logs or browsing history, and it has even invited the auditing titan PwC to verify its privacy claims – and that's just what I like to see from the industry's leading names.

Form and function

Whether you're a VPN newbie or a veteran of the tech, you'll have no trouble installing ExpressVPN on your device. There are fresh, modern-feeling apps available for all platforms, and they're nice and easy to use – no clutter, no cramped UI.

I'd like to see ExpressVPN offer more than 8 simultaneous connections someday, however. There are more budget-friendly providers offering more connections for a fraction of the price, although 8 connections should still be enough to ensure you can access Facebook from anywhere, anytime.

ExpressVPN really is an outstanding service, backed up by a five-star 24/7 customer support team and knowledge base. And if that's still not enough, it offers a 30-day money-back guarantee so you can put it to the test in your own time.

2. NordVPN

NordVPN is a popular VPN for unblocking Facebook and Messenger worldwide. It offers fast servers, apps for all platforms, lots of customization, and a 30-day money-back guarantee.

NordVPN comes with all the tools you need to unblock Facebook – minus a hefty price tag! There are thousands of servers dotted across the globe, reliably snappy speeds, and easy-to-use apps you can take with you on the go. It's a fantastic all-rounder with a well-earned reputation.

Servers where you need them

NordVPN has a seriously impressive network, boasting thousands of servers in 111 countries, so you'll have no trouble spoofing your location if you need to avoid geo-restrictions and blocks. I like that the NordVPN apps feature a map, which makes it much easier to visualize the distance between your current location and the server you'd like to connect to, so you can gauge the potential impact on your speeds and ping. Similar to ExpressVPN, NordVPN has a Quick Connect button that pairs you with a server chosen by an algorithm. If you want to unblock Facebook in a matter of seconds without figuring out which server to select, it's well worth using.

At ProPrivacy, we're always monitoring VPN speeds, and NordVPN performs consistently well, often claiming a top-5 spot in our speed chart! As a result, it's a great pick for anyone who wants to stream in HD (and unblock highly sought-after services like Netflix and BBC iPlayer), hop into online games, or join VoIP calls.

Packed with privacy features

NordVPN doesn't pull punches when it comes to safeguarding its users' privacy. With strong OpenVPN encryption, you can be sure no nosy third parties are monitoring your activity across the web – and that's vitally important for folks living in countries where Facebook has been banned. In addition to OpenVPN, you can use the IKEv2 and NordLynx protocols, so you have alternatives if you'd like to tweak your settings to maximize performance. I like that NordVPN also has its own ad-blocker, so annoying pop-ups and malicious trackers won't be a problem, and that its Double VPN feature routes your traffic through two VPN servers for an additional layer of protection.

Like all of the industry's best services, NordVPN is a zero-logs provider that does not keep records of identifiable user information, like IP addresses and traffic data. Plus, the VPN has invited PwC to validate its logging claims. It's also great to see that NordVPN is based in Panama, beyond the reach of invasive US and EU jurisdictions. All in all, you can browse the web without worrying about where your data might end up.

A modern take

NordVPN's apps are some of my favorites! I like the clean, minimalist style and intuitive interface, and I've never had any issues customizing my settings or switching protocols. Honestly, I think NordVPN is a great introductory service for newcomers to the tech, particularly as it only takes a few clicks to set up the app on your desktop, laptop, or mobile device!

Currently, NordVPN offers 10 simultaneous connections, which is in line with the industry average but could be improved so that users can always cover every gadget in the home. With 24/7 customer support via live chat or email, you'll be able to troubleshoot any issues that crop up, and a 30-day money-back guarantee makes it possible to take NordVPN for a trial run without risking a single dime. I'd definitely recommend testing the provider's unblocking power and privacy features for yourself.

3. Surfshark

Surfshark is a great value-for-money VPN for Facebook. It's packed with features, like obfuscated servers, and comes with a 30-day money-back guarantee.

Surfshark is a feature-rich service that's impressively cheap considering everything that's packed into your subscription. It's easy to unblock Facebook with Surfshark, thanks to a global network of super-fast servers, and you'll also have access to all sorts of international content libraries and streaming platforms.

Spoiled for choice

Few VPNs can go toe-to-toe with Surfshark when it comes to server networks: the provider has thousands of speedy servers dotted across 100 countries! This makes it easy to bypass frustrating blocks and bans imposed by your workplace, your ISP, or even a restrictive government. If you're in a rush to unblock Facebook, simply hit the "Fastest Server" option to be paired with a lightning-fast server in a click.

Surfshark is a top pick for avid streamers, too, thanks to its unlimited bandwidth and lack of data caps. As a result, you can stream as many movies, box sets, and TV shows as you like without worrying about buffering hiccups. The VPN can unblock the likes of Netflix, BBC iPlayer, Amazon Prime, and Hulu, to name a few, and it also provides reliable access to all social media platforms, so you can check in with all your accounts at the same time.

A full security toolkit

You won't need to worry about leaving an identifiable trail across the web, or about your data being exploited by a snooper or cybercriminal, thanks to Surfshark's OpenVPN encryption. I'd recommend sticking with the tried-and-tested OpenVPN protocol whenever you can, but if you want to try a speedier option, then check out the IKEv2 or WireGuard alternatives! Surfshark also has a Camouflage Mode obfuscation (stealth) feature that can disguise the fact that you're using a VPN, which is hugely useful if you live or work somewhere where VPNs are banned. I also like Surfshark's kill-switch, though you'll have to enable it yourself, and its ad-blocker, which banishes ads and trackers from your browsing sessions.

In addition, it's great to see that Surfshark has invested in a public security audit of its zero-logs policy, and that it worked with renowned testers Cure53 to do so. I hope Surfshark ultimately commits to regular audits of its infrastructure and policy to prove its commitment to user privacy. Being based in the British Virgin Islands is another big win, as there are no mandatory data retention laws to worry about!

No more limits

Surfshark separates itself from the rest of the VPN herd by offering unlimited simultaneous connections. That's hugely generous and real value for money! With a single subscription, you'll be able to cover all of your devices as well as those belonging to your family and friends, ensuring that they can unblock Facebook, too. And because Surfshark has fresh-feeling apps for all platforms, you'll stay secure whether you're surfing the web on your desktop PC or relying on public Wi-Fi hotspots with your mobile.

If you need to troubleshoot issues or want advice on which server suits your needs, reach out to Surfshark's 24/7 customer service team via email or live chat. And be sure to try the VPN on your own devices by making good use of its 30-day money-back guarantee! If you'd rather not part with a penny, there's a 7-day free trial available for Android, iOS, and Mac users. Just remember to cancel it before you're charged at the end of the week!

4. Private Internet Access

Private Internet Access is a very secure Facebook VPN. It offers a proven zero-logs policy, advanced privacy features for unblocking Facebook, and a 30-day money-back guarantee.

Private Internet Access (PIA) is a US-based provider with a proven zero-logs policy and a huge fleet of servers, making it ideal for privacy-conscious individuals who want to unblock Facebook without any third parties peeking over their shoulder. PIA has long been admired by the privacy community for its well-implemented encryption and features, and it's also one of the cheapest services on our list – a total win/win!

Focused on the States

Currently, PIA has tens of thousands of servers in 84 countries – an impressive feat! You can hop from location to location using the streamlined PIA app and enjoy connections with unlimited bandwidth and no data limits, so PIA is a great option if you regularly browse video content on Facebook. Because the majority of PIA's servers are based in the United States, it's particularly good at unblocking US content that might otherwise be unavailable to folks living overseas, such as Netflix US, HBO, and Peacock. PIA is a must-have tool for fans of US series and movies.

It's also worth noting that PIA is a lightning-fast provider that won't disappoint when it comes to speed. I don't experience slow-loading pages or poor-quality video when connected to a server, and it's even possible to game online with friends or enjoy smooth video calls with co-workers or family members.

Security oriented

Few providers prioritize security like PIA, and that's exactly why it has claimed a top spot in my Facebook recommendations. Unfortunately, the web is riddled with opportunistic cybercriminals and invasive ISPs and agencies, all vying for your details, but PIA prevents this snooping with OpenVPN encryption. Of course, you're welcome to try the WireGuard protocol, too. It's super-secure and far more lightweight, although OpenVPN remains my protocol of choice when it comes to maximizing my digital privacy. You should also take note of PIA's kill-switch, which cuts your internet connection if the VPN connection drops.

PIA is a zero-logs service, which is great news for user privacy, although I would like to see the service invest in a third-party audit of its logging claims. This would give users clear evidence that their identifiable information isn't being squirreled away somewhere. That said, the VPN has already proven its zero-logs claim in court on two occasions! When pressured by authorities, PIA simply had no customer information to hand over, which cements its reputation as a truly security-oriented service in my book.

Customization galore

Advanced users will enjoy being able to customize and tweak PIA's security settings, which makes it much easier to tailor your VPN setup to what you'd like to do online. New VPN users, however, can simply install the app, connect to a server, and unblock Facebook in a blink, without needing to take a deep dive into the settings. And, as a bonus, you get unlimited simultaneous connections!

If you need some extra help, or want to figure out which server is best for unblocking Facebook (or Netflix), don't hesitate to reach out to PIA's customer service team. They're around 24/7, and I've always been pleased with both the speed and detail of their responses. And, as you'd expect from a premium provider, PIA offers a 30-day money-back guarantee, and even a 7-day free trial for iOS and Android users.

5. PrivateVPN

PrivateVPN is the best user-friendly Facebook VPN. Its iOS and Android apps are intuitive and install instantly, and it comes with a 30-day money-back guarantee.

PrivateVPN is a relative newcomer to the VPN scene, but one that's already impressed us here and continues to accrue a loyal user base. It's not hard to see why, either. PrivateVPN effortlessly unblocks social media sites like Facebook, no matter where you are, and is especially good at providing access to a wide range of Netflix libraries.

Your key to content

Admittedly, PrivateVPN has fewer servers than the other providers mentioned in this article, but don't let that deter you. These servers are well placed (in 63 countries) and quick, and the provider itself is still young, with plenty of growing left to do. I especially like how easy it is to geo-hop with PrivateVPN, thanks to its friendly apps that let you sort servers by speed or location.

I'm always seriously impressed by just how many international Netflix catalogues PrivateVPN can unblock. The VPN has its own collection of dynamic dedicated IP addresses that can be used free of charge to browse an endless library of content from around the globe! Plus, you'll also be able to unblock other popular services, like Amazon Prime and Hulu. This unblocking power, and the fact that PrivateVPN can more than handle HD streaming (and other data-intensive tasks), is part of the reason I keep coming back to the service when I want to catch up on new TV shows from across the pond or exclusive originals.

Private by name and nature

Battle-tested OpenVPN encryption will prevent snoopers from pawing through your identifiable data, and that's just the sort of security measure you'd expect from a service with "private" in its name! PrivateVPN ensures that you don't leave a digital footprint across the web, and it even has a handy Stealth VPN feature that can bypass VPN blocks imposed by restrictive governments or workplaces. Torrenters will also be glad to learn that PrivateVPN offers port forwarding, IPv6 and DNS leak protection, and a kill-switch. The kill-switch jumps into action if your VPN connection drops, cutting your internet connection to prevent your original IP address from leaking to your ISP and tipping it off about your activity.

PrivateVPN is a zero-logs provider with a robust policy. However, I think the service would benefit hugely from inviting an auditing firm to perform an independent audit. After all, plenty of other VPNs have done the same to prove their commitment to user privacy.

The best for beginners

PrivateVPN is the service I recommend to total VPN newcomers more often than not. This is because it takes just a few clicks to install, is incredibly easy to use, and features clutter-free apps with a friendly feel. Windows, Mac, Android, iOS, and even Linux users can enjoy PrivateVPN's features, as well as up to 10 simultaneous connections. This is pretty generous, and it makes PrivateVPN a good option for families who want to unblock Facebook on their own devices under the same roof.

The PrivateVPN support team is composed of in-house developers who don't shy away from tough questions, and they're available 24/7. All in all, it's well worth testing PrivateVPN for yourself by making good use of its 30-day money-back guarantee or its free 7-day trial!

How will a VPN unblock Facebook at school?

A VPN encrypts all of the traffic between your device and the VPN server and replaces your IP address with one managed by the VPN provider, so snooping parties on your network can't see what you get up to online. This does two things. Firstly, a VPN service lets you appear to be somewhere else, much like a proxy service (but more securely). Because your network only sees a connection to the VPN server rather than to Facebook, you can access Facebook (and Facebook Messenger) even when it's blocked on your Wi-Fi network.

Secondly, a VPN encrypts the data going to and from your device. This is why they are more secure and reliable than proxy services. This stops Internet Service Providers and local network administrators from being able to monitor what you are doing online.
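To make the idea concrete, here is a minimal Python sketch of what a school or office network filter can see with and without a VPN. It is a toy model and does not reflect how any particular VPN or filter is built. The `Packet` class, the two helper functions, and the IP addresses (taken from ranges reserved for documentation) are all hypothetical. The sketch also assumes the filter blocks traffic by hostname or IP address.

```python
from dataclasses import dataclass
from typing import Optional, Set


@dataclass(frozen=True)
class Packet:
    src_ip: str    # your device on the school/work network
    dst_ip: str    # the server the app ultimately wants to reach
    dst_host: str  # hostname, normally visible to the network via DNS lookups and TLS SNI


def observe_on_local_network(packet: Packet, vpn_server_ip: Optional[str]) -> dict:
    """Return what a local network filter can see about one outgoing packet."""
    if vpn_server_ip is None:
        # Without a VPN, the filter sees the real destination and hostname.
        return {"dst_ip": packet.dst_ip, "dst_host": packet.dst_host}
    # With a VPN, the original packet is encrypted inside a new one addressed
    # to the VPN server, so only the VPN server's address is visible.
    return {"dst_ip": vpn_server_ip, "dst_host": None}


def is_blocked(observation: dict, blocklist: Set[str]) -> bool:
    """A simple filter that blocks by hostname or by IP address."""
    return observation["dst_host"] in blocklist or observation["dst_ip"] in blocklist


pkt = Packet(src_ip="192.0.2.10", dst_ip="203.0.113.5", dst_host="facebook.com")
blocklist = {"facebook.com"}
print(is_blocked(observe_on_local_network(pkt, None), blocklist))            # True
print(is_blocked(observe_on_local_network(pkt, "198.51.100.7"), blocklist))  # False
```

The same model also shows why VPN blocks work. If the network admin adds the VPN server's IP address to the blocklist, `is_blocked` returns `True` again, and that is the situation stealth and obfuscation features are designed for.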

It is worth noting that more and more institutions are wising up to VPNs, and it is increasingly common to find VPNs blocked. Please check out our How to Bypass VPN Blocks guide for information on getting around these blocks on your school network.

How to use a VPN to unblock Facebook at school

To unblock Facebook at school or work, just follow these simple steps:

- Select a VPN for Facebook – we have listed five above.

- Log in to your VPN app.

- Turn on the kill-switch and DNS leak protection – A kill-switch is a security feature that cuts your internet connection if your VPN stops working. Combined with DNS leak protection, it helps stop anyone on your network from finding out you used Facebook.

- Configure your encryption settings – Many VPN apps use OpenVPN by default on Windows and Android, and IKEv2 or WireGuard by default on Mac and iOS. These are among the most secure protocols available.

- Connect to a VPN server – We recommend connecting to the server closest to you (if possible), as this is usually the fastest.

- Head over to Facebook – You will now be able to access Facebook! If your VPN stops working, then try another server.
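The last two steps boil down to a simple rule. Pick the lowest-latency server, and if it stops working, move on to the next best one. The Python sketch below models that rule and assumes latency (ping) is a good stand-in for speed. The server names and numbers are made up. Real features such as ExpressVPN's Smart Location or NordVPN's Quick Connect use the providers' own algorithms, which this sketch does not reproduce.

```python
from typing import Dict, Iterable, Optional


def pick_server(latencies_ms: Dict[str, Optional[float]],
                failed: Iterable[str] = ()) -> Optional[str]:
    """Pick the server with the lowest measured latency, skipping servers that
    already failed or could not be reached (latency None).
    Returns None if no candidate is left."""
    skip = set(failed)
    candidates = {
        name: ms for name, ms in latencies_ms.items()
        if ms is not None and name not in skip
    }
    if not candidates:
        return None
    # min() over the server names, compared by their latency value
    return min(candidates, key=candidates.get)


# Hypothetical measurements, not real data
latencies = {"London": 18.0, "Amsterdam": 27.5, "New York": 81.0, "Tokyo": None}
first = pick_server(latencies)                     # "London"
fallback = pick_server(latencies, failed=[first])  # "Amsterdam"
```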

Can I use a free Facebook VPN?

There are plenty of free VPN services, and they do have their uses. However, if you're looking to access Facebook on a restrictive network or in a country where it is banned, then using a free VPN can be dangerous. Many free VPN services monitor their users and sell that information to third parties. As the saying goes, when a service is offered for free, you are the product, which defeats the entire purpose of using a VPN.

On top of this, free VPNs rarely have sufficient encryption or security features to properly protect you online or to bypass restrictive networks and government firewalls – so they may not even work. Add to this the data and speed restrictions that come with free VPN services, and you have a service that will never be as good as a quality, paid VPN service. Remember, a good VPN doesn't have to be expensive, and if you want to protect your privacy, then a paid VPN is the best way forward. If you want a low-cost, high-security VPN, we recommend Private Internet Access. If that doesn't fit your needs, check out our other recommendations for budget-friendly VPNs.

Will a VPN stop Facebook from tracking my location?

A VPN is a great way to hide your IP address and cloak your internet traffic. However, Facebook can still track your true location by means other than your IP address, such as device-level GPS services and tracking cookies. But don't worry, there are ways to get around this:

Preventing tracking on a computer

If you're accessing Facebook via a desktop or laptop computer, then there are a couple of things that you need to do before you can access Facebook without revealing your true location:

- Launch your VPN and choose an appropriate server.

- Launch your browser and clear your cookies and cache.

- Disable geolocation.

- Open a new tab in incognito/private browsing mode.

- Log in to Facebook in that private tab.

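It's important to log in from an incognito window. Incognito mode starts without your existing cookies, so identifying cookies stored during your regular browsing sessions aren't sent to Facebook.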


Preventing tracking on a mobile device

Using Facebook on a mobile device requires a slightly different approach (as most smartphones and tablets have built-in GPS capabilities). If you want to access Facebook securely on a mobile device without it tracking your true location:

- Uninstall/Disable the Facebook app.

- Turn off location settings (GPS tracking) for Facebook in your device settings.

- Turn off location settings for Google and/or your browser in your device settings.

- Launch your VPN and connect to an appropriate server.

- Launch your browser and delete your cache and cookies.

- Open a new tab in incognito/private browsing mode.

- Log in to Facebook.

Access Facebook if it is blocked in your country

Some VPNs are great for unblocking streaming content online. However, they may not be considered secure enough to protect you in a country where Facebook is blocked. That is why it is vitally important to select a VPN with the following privacy features:

- DNS leak protection – This stops you from leaking DNS requests to your ISP. A DNS leak could reveal that you used Facebook, so it's always a good idea to turn on DNS leak protection.

- Kill-switch – This feature cuts your internet connection if the VPN drops, so no traffic that could reveal you accessed Facebook leaks to your ISP.

- Auto-connect – This feature re-connects the VPN if the connection drops out.

- Stealth mode or "cloaking" – This conceals VPN use and allows people to use VPNs in places that block VPN connections.
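A kill-switch and DNS leak protection are essentially firewall rules. The Python function below is a hypothetical, simplified model of those rules. The interface names `tun0` and `wlan0`, the IP addresses, and the class names are illustrative assumptions and are not taken from any VPN app. Under this model, traffic may leave only through the VPN tunnel, and DNS lookups may only go to the VPN's own resolver. The one exception is the encrypted connection to the VPN server itself, which must be allowed so the VPN can (re)connect.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OutgoingPacket:
    interface: str  # network interface the packet would leave through
    dst_ip: str
    dst_port: int


@dataclass(frozen=True)
class VpnState:
    tunnel_up: bool
    vpn_server_ip: str
    vpn_dns_ip: str
    tunnel_interface: str = "tun0"     # hypothetical virtual VPN interface
    physical_interface: str = "wlan0"  # hypothetical Wi-Fi interface


def allow(packet: OutgoingPacket, state: VpnState) -> bool:
    """Toy kill-switch and DNS-leak-protection rule for one outgoing packet."""
    # The encrypted tunnel itself must be able to reach the VPN server over
    # Wi-Fi, otherwise the VPN could never connect or reconnect.
    if packet.interface == state.physical_interface and packet.dst_ip == state.vpn_server_ip:
        return True
    # Kill-switch: everything else is dropped unless the tunnel is up
    # and the packet is routed through it.
    if not state.tunnel_up or packet.interface != state.tunnel_interface:
        return False
    # DNS leak protection: DNS queries (port 53) may only go to the VPN's resolver.
    if packet.dst_port == 53 and packet.dst_ip != state.vpn_dns_ip:
        return False
    return True


up = VpnState(tunnel_up=True, vpn_server_ip="198.51.100.7", vpn_dns_ip="10.8.0.1")
down = VpnState(tunnel_up=False, vpn_server_ip="198.51.100.7", vpn_dns_ip="10.8.0.1")
print(allow(OutgoingPacket("tun0", "203.0.113.5", 443), up))    # True: goes through the tunnel
print(allow(OutgoingPacket("tun0", "203.0.113.5", 443), down))  # False: kill-switch engaged
```

Real VPN apps typically enforce rules like these with the operating system's firewall rather than in application code, but the decisions they make are the same.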

Where is Facebook Blocked?

Many governments block Facebook because it facilitates the discussion of dissenting views and exposes citizens to different ideologies from around the world. Some governments see Facebook as a threat to their power. The following countries censor access to Facebook (please note that this information is subject to change):

- China – Facebook is completely blocked in mainland China. That said, there are an estimated half a million Facebook users in China. For more details on bypassing Chinese censorship, check out our Best VPN for China guide.

- Iran – The Iranian government uses specially trained "cyber-police units" tasked with tracking down visitors to banned websites, including Facebook. It has been reported that Iranians use local VPN providers to hide their online activities; however, many of these VPNs appear to be government-run honeypots. People caught trying to evade the government's bans face arrest, interrogation, torture, jail, and even death.

- North Korea – In a world full of oppressive regimes, North Korea is the most repressive of all. The vast majority of the population has no internet access at all. China supplies some broadband lines to North Korea, so a few selected government and party officials probably have limited access to the internet. It is very unlikely, however, that they are permitted to use this access to browse Facebook.

- Syria – Facebook is blocked in many cities in Syria by both the government and ISIS.

- Vietnam – Facebook is unofficially banned, but appears to be widely available to home users. Many hotels and internet cafes block access to Facebook.

- Russia – Since its invasion of Ukraine in 2022, Russia has blocked Facebook and banned other foreign media outlets, including social media platforms (in an attempt to replicate China's Great Firewall).

Unblock Facebook Messenger at school

Facebook Messenger, like Facebook itself, is blocked by a lot of schools and colleges. Fortunately, the solution is the same: you can use a VPN to unblock Facebook Messenger at school. All the services listed above will work to unblock it.

The service is also blocked in some countries. China, Iran, and North Korea have a permanent ban on Facebook and Messenger. However, many other countries, including Bangladesh, Syria, Egypt, and Turkey, sometimes black out social media sites and messengers, usually during politically sensitive events such as protests. Saudi Arabia has a permanent ban on Facebook Messenger, but not on Facebook. That ban is intended to protect the income of the Saudi state-owned telecoms firm.

Conclusion

It's important to weigh up each VPN provider against your own needs. Whether you're looking for a low price, robust stealth features, overall performance, or just plenty of servers, picking the right VPN can save you hassle in the future. Here's a quick reminder of our top choices for Facebook VPNs:

FAQs