With a VPN, UK citizens can protect their online privacy when surfing the web and unblock content from anywhere in the world.

The UK has fast become one of the most snooped-on places in the world due to the Investigatory Powers Act (a.k.a. the Snooper's Charter). Thankfully, with a VPN, UK citizens can protect their online privacy when surfing the web and stop the snoopers in their tracks.

What's more, a VPN can also unblock content that isn't available in the United Kingdom, such as Hulu or DAZN. VPNs can also provide you with a UK IP address so that if you're located outside of the UK, or you just go on holiday to another country, you can watch BBC iPlayer outside the UK, as well as other British TV channels.

If this has piqued your interest, read on to find out what the best UK VPN services are.

WANT TO TRY OUR BEST RATED VPN RISK FREE?

ExpressVPN offers a risk-free 30-day trial if you sign up here. You can use our #1 rated VPN with no limitations for an entire month.

There are no strings attached—just contact support within 30 days if you decide ExpressVPN isn't right for you and you'll get a full refund, no questions asked.

What are the best VPNs for the UK in 2024?

Our testing found these to be the best VPNs for the UK in 2024:

- ExpressVPN - The best VPN for the UK. ExpressVPN is fast and secure, making it perfect for torrenting, streaming & gaming.

- NordVPN - A great VPN pick for the UK regardless of what device you use. NordVPN works great on all platforms, including iOS and Android.

- CyberGhost VPN - A UK VPN that is packed with value. You get 7 simultaneous connections and slick mobile apps for Android & iOS.

- Surfshark - A cheap VPN for the UK. From $2.49 a month, you can unblock US Netflix and BBC iPlayer on all devices, including smart TVs.

- VyprVPN - A great all-round VPN choice for the UK. It has proprietary servers with fast speeds & improved levels of privacy.


How we found the best UK VPN services

We have been reviewing VPN services since 2013 and in that time we have tested 150+ VPNs, so we know our stuff. Our researchers test each service themselves and write a summary of the service based on their experience. When putting together our list of the best VPNs to use in the UK, we ask the following questions:

- How secure is the VPN?

- Does it protect your privacy?

- What speeds does the VPN offer?

- How many servers does it have?

- What can it unblock?

- Is it good value for money?

What is a VPN?

You might've previously heard the term "Virtual Private Network" without knowing exactly what it means or what it does. Essentially, a VPN is a service you can use on your PC, laptop, or phone (amongst other devices) to protect your digital privacy and access restricted sites and services on the web.

A VPN creates an encrypted tunnel between your device and the VPN server, and acts as your gateway to the internet. All data that flows through this tunnel is securely encrypted – meaning nobody, not even your ISP, can take a peek at it.
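To make the tunnel idea concrete, here is a minimal Python sketch of what an observer such as your ISP can and cannot see. It is a toy model, not how any real VPN client is implemented: the `Packet` class, the `wrap` and `unwrap` helpers, and the IP addresses are all made up for illustration, and a simple one-time-pad XOR stands in for the AES-256 encryption that real services use.

```python
import secrets
from dataclasses import dataclass


@dataclass
class Packet:
    src: str        # sender address
    dst: str        # destination address, visible to anyone on the path
    payload: bytes  # contents of the packet


def xor_bytes(data: bytes, key: bytes) -> bytes:
    """Toy one-time-pad cipher. A stand-in for AES-256, NOT real VPN crypto."""
    if len(key) < len(data):
        raise ValueError("key must be at least as long as the data")
    return bytes(d ^ k for d, k in zip(data, key))


def wrap(inner: Packet, device_ip: str, vpn_ip: str, key: bytes) -> Packet:
    """Device side: encrypt the real destination and contents together,
    then address the result to the VPN server."""
    plaintext = f"{inner.dst}|".encode() + inner.payload
    return Packet(src=device_ip, dst=vpn_ip, payload=xor_bytes(plaintext, key))


def unwrap(outer: Packet, key: bytes) -> Packet:
    """VPN server side: decrypt, then forward to the real destination
    using the server's own address as the sender."""
    dst, _, payload = xor_bytes(outer.payload, key).partition(b"|")
    return Packet(src=outer.dst, dst=dst.decode(), payload=payload)


# Hypothetical addresses taken from the reserved documentation ranges.
key = secrets.token_bytes(64)
request = Packet(src="192.0.2.10", dst="example.com", payload=b"GET /show")

on_the_wire = wrap(request, device_ip="192.0.2.10", vpn_ip="203.0.113.5", key=key)
forwarded = unwrap(on_the_wire, key)
```

Anyone watching the connection sees only `on_the_wire`: a packet addressed to the VPN server with an unreadable payload. The website, in turn, receives `forwarded`, which carries the VPN server's address rather than yours.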

As a result, you'll be able to browse the web with privacy! And because a VPN can assign you a new, temporary IP address based in the location of the server you connect to, it's totally possible to check out what's new on Netflix US, even if you live on the other side of the world! Of course, being able to access restricted sites could be that much more important if you're living in a country where an oppressive government is doing the restricting.

Looking for more?

If you're interested in learning more about what a VPN can do and how it does it, check out our beginner's guide to VPNs for a jargon-free explanation.

The 10 best VPNs to use in the UK in 2024

Here's a full list of the VPNs we recommend for the UK today:

1. ExpressVPN

Best VPN for the UK: ExpressVPN is our #1 pick. A premium service, with a premium price tag. It has been the leading VPN option in terms of speed, privacy and unblocking for years.

ExpressVPN is a veteran in the VPN industry and it has acquired a great reputation over the years. It has three server locations in the UK, all in the south of England. I really like that ExpressVPN strikes an outstanding balance between flashy features and security. You can connect to the VPN with one click, it offers a variety of accessible features from the home screen, and the servers are exceedingly fast.

ExpressVPN is more expensive than the other services on this list, so if cost is one of your main concerns then you may want to consider another option. However, ExpressVPN is tough to beat. I use ExpressVPN on a daily basis because of the quality of the app. You can use the service on 5 devices simultaneously, which is pretty handy: I was able to install the VPN on my phone, laptop, and tablet.

As always, I can't praise ExpressVPN's speeds enough. ExpressVPN has servers in 105 countries, and all those servers are lightning-fast. I was able to use this service for gaming, HD streaming, and other data-intensive tasks like video conferencing and torrenting without seeing any impact on my connection speeds. We run VPN speed tests every day and ExpressVPN is consistently one of the fastest services we test.

ExpressVPN is a zero-logs VPN, which means that it doesn't keep track of what you do online. It implements OpenVPN on all platforms, which is great to see as this is the gold standard of VPN protocols. It also provides users with robust AES-256 encryption, which keeps your data secure and away from prying eyes, so you can connect to public Wi-Fi with confidence and peace of mind. On top of that, ExpressVPN offers excellent security features, including a kill-switch, DNS leak protection, and obfuscated servers (that hide the fact you're using a VPN). That means it has all the premium VPN features you are likely to need.

Checking out UK content like BBC iPlayer, Channel 4, and Netflix is a breeze thanks to its UK server locations. I was able to access Netflix US and Amazon Prime from the UK in HD without any buffering. If you want to test the best service money can buy, take advantage of its 30-day money-back guarantee and get maximum protection without risking your cash.

2. NordVPN

NordVPN is a versatile VPN for the UK. It offers great apps for all devices, including smartphones, and it works great when streaming, unblocking, torrenting and gaming.

NordVPN is a well-established service that has a lot of advanced privacy features. I think this VPN is ideal for anybody living in the UK thanks to its choice of fast British servers, which are based in London. When testing NordVPN, I found it gets around geo-blocks really well. NordVPN is great for accessing BBC iPlayer, All4, NowTV, British internet banking sites, or virtually any other geo-restricted UK content you can think of.
It also has servers in the USA that unblock Netflix US, Hulu, YouTube TV, HBO Max, and much more great content. This makes NordVPN an excellent all-rounder.

NordVPN has great apps for Android, iOS, Windows, and Mac. I particularly liked its CyberSec feature, which is a malware and ad blocker. It blocks potentially dangerous websites by blocking DNS requests based on a real-time blocklist of harmful websites. This feature is available on both desktop and mobile apps, which is great.

This VPN also offers split tunneling, which is handy. Using NordVPN's split tunneling tool, I set the VPN to stay on for certain sites that have geo-restrictions, like Netflix US, but off for other sites I wanted to access without a VPN, like online banking sites or work-related resources.

NordVPN has two Mac apps, which is slightly confusing. The older OpenVPN client has all of these features, but I detected a leak when testing it. The IKEv2 client (the second client) has fewer features; however, I found this one didn't leak my IP address.

NordVPN is a no-logs service based in Panama, which makes it great for gaining online privacy. And this VPN has the features you need to torrent safely, or to access government-censored services without being tracked by your ISP or the British government. Add to that a 6-device limit and a 30-day money-back guarantee that lets you trial the service. A lightning-fast VPN that is well worth taking for a test run.

3. CyberGhost VPN

CyberGhost offers great value if you want a VPN for the UK. It's super easy to use on Windows, Mac, Android, & iOS and it's packed full of features to make your life easier.

CyberGhost VPN is an excellent service at an affordable price, especially if you opt for a long-term subscription. This VPN has three UK server locations: Berkshire, Manchester, and London. It offers good security features and unblocks streaming services like iPlayer, Netflix and more.
However, this service doesn't offer obfuscated (stealth) servers, so if this is a must-have then you may want to consider ExpressVPN. CyberGhost is a zero-logs service, and it implements OpenVPN encryption, which means that your ISP will never be able to track what you do online on behalf of the government. If you're new to VPN services, this offers everything you need to improve your online security, and at an affordable price.

CyberGhost has servers in 100 countries, and it provides access to sought-after streaming services such as Netflix US, Hulu, Amazon Prime, ITV Hub, and BBC iPlayer. However, compared to others on this list, it can be a little temperamental at times.

I have tested a lot of VPNs for the UK whilst away from the country, and CyberGhost is a real pack leader. Having plenty of servers in the UK is great, but I found the servers that are specifically optimized for BBC iPlayer and Netflix UK particularly useful. These can be found easily using the streaming tab in the app, or you can search for the streaming service you want to unblock in the search bar and it will return the optimized server for that service. When I've traveled halfway around the world, for work or pleasure, and just want to watch some good ol' British comedy, CyberGhost has always delivered.

The software is no-frills and incredibly easy to use, making it an ideal option for beginners who do not need a lot of techy features. You can use CyberGhost VPN on any device, and the VPN is extremely generous, permitting users to install and use it on up to 7 devices simultaneously. What's more, you can test this VPN risk-free thanks to its whopping 45-day money-back guarantee, which is more than any other leading provider.

4. Surfshark

Surfshark is a cheap VPN for getting a UK IP address. This is a new service, but it has quickly made a name for itself thanks to its solid speeds and unblocking capabilities.

Surfshark is the cheapest VPN on this list, especially when opting for a long subscription. It has 3 UK server locations, based in London, Manchester, and Glasgow. I was impressed by the premium features offered by this service, which are bound to be useful to a wide range of VPN users. These include a full Smart DNS service, static IP addresses, DNS ad-blocking, MultiHop VPN, and more.

If you're a Mac user, you should know that Surfshark does not offer OpenVPN in its macOS apps. Instead, it offers IKEv2.
This is still a robust and secure protocol, but OpenVPN is still preferable.

Surfshark offers three handy servers in the UK, providing non-Brits with a UK IP address that'll successfully unblock the world-famous BBC iPlayer. I am an American expat living in Hungary and I am a huge fan of British TV shows, so I often fire up Surfshark and use it to unblock shows on iPlayer, ITV, and Channel 4. Native Brits can make use of thousands of servers across 100 countries, along with the ability to unblock the full US Netflix catalog. Streamers will also appreciate the included smart DNS service, which allows you to unblock a range of services on devices such as games consoles and smart TVs that don't allow you to install a VPN client. Don't just take our word for it: hop into the service with the assurance of a 30-day money-back guarantee.

Pretty much every server I connected to was super quick, and because I use a lot of different devices, I loved how I can connect all of them at once with Surfshark. The apps are great no matter which device I used, be it my Mac or Windows laptop, my Samsung tablet running Android, or my iPhone. I even run it on my smart TV and PS5 to stream content on iPlayer and Netflix. Surfshark's smart DNS service makes it easy to set up on PS5 and smart TVs, and there are some handy guides on its website showing you exactly how to do this.

5. VyprVPN

VyprVPN is a great all-round UK-friendly VPN. You get a balance of privacy and speed thanks to its excellent privacy features & proprietary network of servers.

VyprVPN is a fantastic service with UK servers located in London and servers in 64 countries overall. Its UK servers are ideal for unblocking content from the UK. Whenever I have taken VyprVPN out for a spin, I never had any problems connecting to UK servers and streaming geo-restricted content like BBC iPlayer. It was also able to unblock other popular UK services, such as Channel 4 OD and UK Netflix, with minimal buffering. I found this quite useful on holiday, especially if I'm midway through a show and I want to continue watching in the evenings. I was also able to bypass geo-blocks to access US Netflix and restricted YouTube videos.

I think this VPN is easy to use, and it has excellent apps for all platforms. The recently updated version of VyprVPN (3.0) adds even more control to an already exceptional platform. VyprVPN changed the design of the app, which I think makes it much more enjoyable. VyprVPN said they listened to customer feedback before making these changes, which is great to hear and shows they value their users.

All of VyprVPN's UK servers are owned and managed by VyprVPN. They are the only VPN provider that I know of that does this, and it's a big plus in terms of security.

Where privacy is concerned, this VPN is strong. Not only does VyprVPN provide well-implemented OpenVPN encryption, but it also has market-leading VPN features such as DNS leak protection, obfuscated servers (Chameleon), and a kill-switch. What's more, this VPN recently underwent a third-party audit of both its software and infrastructure, meaning that you can trust it to work as advertised.

For anybody who is new to VPNs or is having setup or connection problems, VyprVPN has fantastic 24/7 live chat support to help you out. They also have an active community forum, which is handy for getting any issues you may have resolved. This VPN's 5 simultaneous connection allowance means you can use it across plenty of devices at the same time. Finally, you can test this VPN risk-free thanks to its 30-day money-back guarantee.

6. IPVanish

IPVanish is a great VPN for the UK that's easy to use.
It's also fast, reliable and works well with US Netflix thanks to its fast server network that spans the globe.

IPVanish has three server locations in the United Kingdom, based in London, Glasgow, and Birmingham. I found it to be fast, and it has plenty of useful features. Whenever I fire up IPVanish, I am always amazed at all the things it can do and how easy it is to use. The apps are neat and the features you need are very easy to get to, making it super slick on all devices. I love that it shows the ping times of the server you are connected to, as it makes it easy to find a quick server.

It has lots of useful features too, including a kill-switch, DNS leak protection, OpenVPN encryption, and obfuscation. This makes it ideal for gaining digital privacy from your ISP and the government. It also means it is good for watching streams in privacy, or for unblocking content at work or on public Wi-Fi.

I found that the connection speeds are incredible. We regularly test VPN speeds at ProPrivacy and this service is always near the top of the list. You can also see this in the app, as you're not waiting around for a few seconds before you have connected to the server. I enjoy using this VPN on all platforms and am always happy with the connection speeds I get, even when on 4G. Well worth testing using its 30-day money-back guarantee.

The security offered is top-notch: it has DNS leak protection, an independently audited no-logs policy, and very well-implemented encryption protocols. Overall, IPVanish is a real dream to use, whether I was at home on my laptop or using public Wi-Fi when traveling into the office.

7. Private Internet Access

PIA is one of the most secure VPNs to use in the UK. It is serious about privacy, from a no-logs policy to excellent levels of encryption & great privacy features.

Private Internet Access (PIA) runs UK VPN servers in London, Manchester, and Southampton, making it a great option for anyone needing a UK IP address.
These servers will let you access UK internet banking and other locally geo-restricted services. That said, I tested it with BBC iPlayer on both the website and on Kodi, and PIA wasn't able to unblock it, unfortunately.

This is a service that really lives up to its name as being private and secure. Brits wishing to avoid government surveillance will appreciate the fact that this privacy-friendly company runs servers in 84 countries around the world and has the almost unique distinction of having its no-logs claims proven in front of a court of law, not just once, but twice. Yes, this provider was ordered to hand over logs by a US court and it didn't have anything to hand over.

I used the VPN for gaining privacy both at home and on public Wi-Fi and was happy with the stability and speeds it provided. I really liked that the VPN offers strong OpenVPN and WireGuard to Mac users. Private Internet Access also has a Linux GUI client, which is quite rare. If you're a Linux user, then this is the best service for you.

If, like me, you are a torrenter, you will be happy with the advanced features this VPN provides. I used port forwarding to ensure that I could communicate with as many peers as possible to get the fastest download speeds. Considering the low cost, PIA is pretty stacked, because you also get split-tunneling and DNS-based ad and malware blocking, which is an extremely useful addition.

While PIA offers a lot of customizability, I was happy to see that it comes set to OpenVPN by default, so it is ready to use straight out of the box (no need for VPN beginners to stress). A great service that is worth trialing using its 30-day money-back guarantee!

8. Ivacy

Ivacy is the best cheap VPN for the UK. From $1.50 per month you get a secure service with decent speeds & solid unblocking capabilities across more than 100 countries.

Ivacy is a VPN that is consistently rated highly by users. It has two UK server locations, which are Maidenhead and London. The VPN is cheap considering that it is a no-logs service with apps for all platforms, and it has everything you need to gain digital privacy in the UK. I enjoyed using all of Ivacy's apps, and I particularly like that it has advanced features like a kill-switch and DNS leak protection, which makes it suitable for torrenting.

You can connect to servers in over 69 countries worldwide, and they're perfect for unblocking streams, game servers, and anything else you can think of. I used Ivacy to access Netflix US and found it to work excellently. Admittedly, this VPN isn't quite as fast as some of our other recommendations. But it does cope well with HD and downloading, and I consider it fast enough for the vast majority of people's needs.

For those who require port forwarding, this is available as a bolt-on feature but is not included in the standard subscription. You can also get a static IP if you need one (at a small extra cost). This is an excellent VPN for people in the UK that want to avoid government snooping. Well worth comparing to our other options using its money-back guarantee.

9. ProtonVPN

ProtonVPN is a trusted VPN for the UK from the makers of ProtonMail. It's reliable and secure, but it also packs a punch for any streaming fans as well. A solid all-round choice.

ProtonVPN has plenty of servers in the UK. Proton has only been around a handful of years; however, in that short time it has become a consumer favorite thanks to its free plan and its advanced privacy settings. This VPN is based in Switzerland, a location that is known to be great for digital privacy. It is also a no-logs service, which means you can trust it to always provide privacy for everything you do online. The provider has such a strong and transparent commitment to digital privacy that I think it's well worth a look.

I am constantly impressed by the sheer number of UK servers that ProtonVPN has on offer (though you'll get access to more if you're a premium user). The only downside is that I found ProtonVPN to be a bit slower than the other names in this list. They offer free users a 7-day trial of their premium service too, which is great. I particularly like that ProtonVPN is open-source, and that it publishes all of its professional audit results.
This VPN has apps for all platforms, which means that UK residents can use it both on their home computers, at work, or when using mobile devices on public Wi-Fi. ProtonVPN has servers in 91 countries

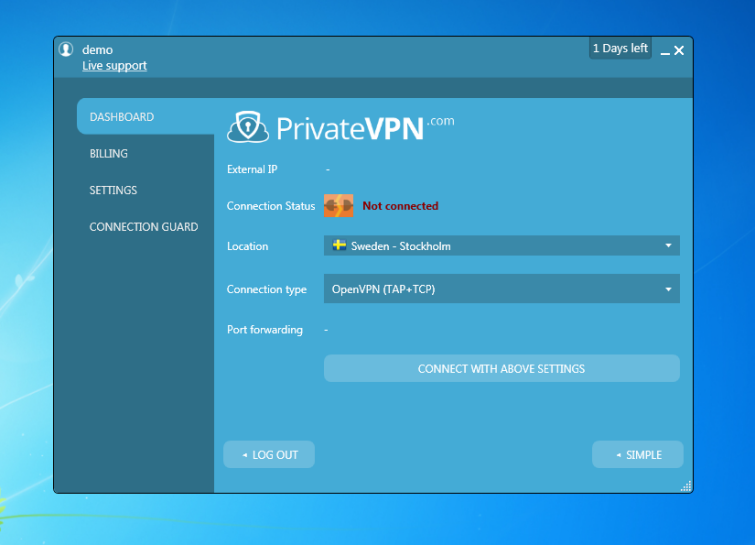

in total – making it ideal for bypassing all manner of geo-restrictions. It also permits torrenting. Admittedly, ProtonVPN isn't as fast as some of the other services I have recommended. However, it always lets us stream Netflix US without buffering, and it's well worth taking for a test-run using its 30-day money-back guarantee. PrivateVPN is a great streaming VPN for the UK. This reliable VPN from Sweden can unblock more Netflix catalogs than just about anyone else and can stream in HD. PrivateVPN Demo PrivateVPN has servers located in London and Manchester. I found this VPN is perfect for people living in the UK that want a service for streaming. PrivateVPN provides excellent speeds, and it is constantly being praised by its user due to its ease of use and because it unblocks iPlayer, Netflix, and more. I was able to unblock both iPlayer and Netflix US with PrivateVPN which was great. PrivateVPN can unblock, not only US Netflix, but a large range of other Netflix catalogs – and it also works with Channel 4, ITV, and many other streaming services around the world. PrivateVPN has a zero-logs policy and is based in Sweden, which offers outstanding privacy laws. With PrivateVPN's dedicated stealth servers and kill-switch, you can protect your data privacy from your ISP and the British government without the fear of being discovered. Best of all, it's super cheap, too! I used it on Android, iOS, and Windows and found it to be easy to use on all platforms. What's more, you can connect to it with six devices simultaneously which I found to be more than enough to use it both at home and on public Wi-Fi. Like the other VPNs in this guide, you can test it using its 30-day money-back guarantee. Perhaps the only drawback of this VPN is that it is not as fast as the other VPNs I have recommended. That said, it never had any trouble buffering. 1. ExpressVPN


Our methodology – how do we test VPNs?

We're serious about transparency here at ProPrivacy, and to make sure we're only reporting the most accurate and up-to-date info, we constantly test and trial the VPNs we recommend.

There are tons of VPNs on the market, after all, and not all of them are secure... or even functional.

Our writers each trialed several VPNs, including household names, promising newcomers, and smaller services – so you can rest assured that our reviews are all bespoke, relevant, and reflect our actual experience with the product. But, in order for a VPN to claim a spot in our list of the best VPNs for the UK, it has to be put through a series of comprehensive tests.

First and foremost, we made sure that the VPNs all had a selection of servers within the UK, and that they were speedy enough to handle HD streams! We have our very own speed-testing methodology to measure this, and we also made good use of our leak testing tool to ensure that the VPNs weren't putting user data at risk.
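As a rough illustration of what a leak test checks, the Python sketch below compares the addresses a test page can observe while the VPN is connected against the user's real addresses. This is not ProPrivacy's actual tool: the `find_leaks` function and the sample addresses are hypothetical, and a real test would gather the observed addresses from web requests, WebRTC, and DNS lookups rather than take them as input.

```python
import ipaddress


def find_leaks(real_ips, observed_ips):
    """Return the observed addresses that match one of the user's real ones.

    Parsing with ipaddress normalizes different spellings of the same
    address, which matters for IPv6 in particular.
    """
    real = {ipaddress.ip_address(ip) for ip in real_ips}
    observed = {ipaddress.ip_address(ip) for ip in observed_ips}
    return sorted(str(ip) for ip in real & observed)


# Hypothetical example using reserved documentation addresses.
real_ips = ["198.51.100.7", "2001:db8::1"]

# Addresses seen by the test page while the VPN is connected: the IPv4
# address belongs to the VPN server, but the real IPv6 address shows up.
seen_while_connected = [
    "203.0.113.5",
    "2001:0db8:0000:0000:0000:0000:0000:0001",
]

leaks = find_leaks(real_ips, seen_while_connected)
```

An empty result means none of the real addresses were visible through the VPN. Here the IPv6 address slips through, which is the kind of IPv4/6 leak the comparison table below has a row for.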

We always comb through privacy policies, check out jurisdictions, and look for no-logs policies – three massively important privacy factors. Encryption is just as important, however, and we make sure to examine how these VPNs implement their encryption standards, as well as the type of encryption they offer. If users also get a kill-switch or obfuscated (stealth) servers, that's a bonus in our eyes!

Next, we'll check out which streaming services the VPN can unblock. Unfortunately, more and more sites are blocking VPN IPs, so it's important that we let you know, before you buy, whether the service in question can actually unblock Netflix US or HBO Max, for example. Our final consideration is price, seeing as you don't have to break the bank to get a reliable, secure VPN – there are some awesome budget-friendly alternatives out there.

To be completely transparent, we have included in the table below some of the features that we compare when we put together this list. This will allow you to compare the key features of these services and choose the best VPN for your needs.

| | ExpressVPN | NordVPN | CyberGhost VPN | Surfshark | VyprVPN |
|---|---|---|---|---|---|
| ProPrivacy.com SpeedTest (average) | 100 Mbps | 85.9 Mbps | 63.92 Mbps | 41.0 Mbps | 20.9 Mbps |
| Netflix | | | | | |
| iPlayer | | | | | |
| Amazon Prime | | | | | |
| IPv4/6 leak detected | | | | | |
| Independently audited? | | | | | |
| Jurisdiction | British Virgin Islands | Panama | Romania | British Virgin Islands | Switzerland |
| Logs aggregated or anonymized data | | | | | |
| Obfuscation (stealth) | | | | | |

What are the fastest UK VPNs?

For data-intensive tasks like gaming, streaming, and torrenting, you will need a super-fast VPN. Not all VPNs provide the speeds you require, which is why it is a good idea to stick to our recommendations. The table below provides the results of our regular speed tests, so that you can see how each VPN is currently performing and subscribe to the fastest VPN for the UK if you want to.

| | ExpressVPN | NordVPN | CyberGhost VPN |
|---|---|---|---|
| ProPrivacy.com SpeedTest (average) | 100 | 85.9 | 63.92 |
| Speed | 100 | 568.0 | 556.2 |
| Performance | 10 | 9 | 7 |
| Reliability | 9 | 8 | 7 |

Will a VPN improve my speeds?

A VPN will not improve internet speeds most of the time. This is because your connection speed is determined by your contract with your ISP. Even the fastest VPNs must encrypt your traffic and route it further (via the VPN server), which will usually incur some latency.

That said, a VPN can prevent your ISP from engaging in bandwidth throttling when you perform data-intensive tasks such as gaming or downloading torrents. If you believe your ISP is throttling you, it is definitely worth trying a VPN to see if it improves your speeds.

However, if you have naturally slow broadband speeds, using a VPN will not help improve those speeds.
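The reasoning in this section can be written down as a small back-of-the-envelope model in Python. Everything in it is an assumption made for illustration: the `expected_speed` function is hypothetical, the 10% VPN overhead is a made-up figure rather than a measured one, and the model assumes the ISP cannot apply its throttle to traffic it cannot classify.

```python
def expected_speed(line_mbps, throttle_cap_mbps=None, vpn_overhead=0.10, use_vpn=False):
    """Rough estimate of download speed in Mbps under a simplified model.

    line_mbps          speed your ISP contract delivers
    throttle_cap_mbps  cap the ISP applies to certain traffic, if any
    vpn_overhead       fraction of speed lost to encryption and rerouting
                       (0.10 is an assumed value, not a measurement)
    """
    if use_vpn:
        # Assumption: the ISP cannot tell what the traffic is, so the
        # throttle does not apply, but the VPN overhead always does.
        return line_mbps * (1 - vpn_overhead)
    if throttle_cap_mbps is not None:
        return min(line_mbps, throttle_cap_mbps)
    return line_mbps


# Throttled connection: the VPN helps.
throttled_without_vpn = expected_speed(100, throttle_cap_mbps=20)
throttled_with_vpn = expected_speed(100, throttle_cap_mbps=20, use_vpn=True)

# Naturally slow connection with no throttling: the VPN only adds overhead.
slow_without_vpn = expected_speed(10)
slow_with_vpn = expected_speed(10, use_vpn=True)
```

Under these assumptions the VPN only comes out ahead when the throttle cap is lower than the line speed minus the VPN overhead. On an unthrottled or naturally slow line, the VPN result is always the lower of the two.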

Why should you use a VPN in the UK?

A VPN is a privacy tool designed to prevent local networks, ISPs, government agencies, and websites from tracking you online. By connecting to a VPN you can use the internet anywhere without concern that someone is monitoring your activities.

In addition, a VPN conceals your real IP address from the services you visit, which permits you to pretend to be in a different location. This lets you access geo-restricted content and gives you the freedom to access any websites that have been blocked by ISPs.

To help summarize, we have listed some of the benefits of using a VPN in the UK below:

Your ISP cannot see any of your data

Under the Investigatory Powers Act, UK ISPs must harvest web history data and metadata about their customers, which is then made available to the government, HMRC, and around 50 other government agencies.

In addition, ISPs are ordered by the government to restrict access to certain websites, such as torrent sites. By using a VPN in the UK, you can prevent surveillance and bypass those annoying blocks imposed by your internet service provider.

A VPN encrypts your data, which stops your Internet Service Provider (ISP) from tracking your entire web history and communications metadata on behalf of the government and HMRC. The VPN server acts as a proxy sitting between you and the internet: your ISP can see that you are connected to it, but cannot see beyond it.

Unblock UK streaming services, or streaming sites blocked in the UK

One of the most useful functions of a VPN is to provide access to geo-restricted content from all over the world. As we've already mentioned, a VPN lets you connect to servers in any country around the world, so the possibilities are endless.

Streaming with VPNs outside the UK

If you are a UK expat currently living abroad, or if you are just traveling outside the UK and want to access BBC iPlayer, ITV Hub or one of the other UK-based streaming services, then a UK VPN can help.

To do this, all you need to do is connect to a VPN server in the UK, so any of the VPN recommendations we have made above will do the trick. Once you have connected to a UK server, you can browse to your favorite streaming service and start watching!
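The reason this works can be sketched in a few lines of Python. A geo-restricted service typically looks up the country of the IP address a request comes from and decides on that basis. The lookup table, the addresses, and the `country_of` and `uk_service_allows` functions below are all hypothetical stand-ins; real services rely on commercial geolocation databases and may also block known VPN addresses.

```python
import ipaddress

# Hypothetical geolocation table built from reserved documentation ranges.
GEO_TABLE = {
    ipaddress.ip_network("198.51.100.0/24"): "HU",  # pretend: hotel Wi-Fi abroad
    ipaddress.ip_network("203.0.113.0/24"): "GB",   # pretend: a VPN's UK server
}


def country_of(ip):
    """Return the country code for an address, or None if it is unknown."""
    addr = ipaddress.ip_address(ip)
    for network, country in GEO_TABLE.items():
        if addr in network:
            return country
    return None


def uk_service_allows(ip):
    """A UK-only streaming service admits requests that appear to come from GB."""
    return country_of(ip) == "GB"


# Without a VPN the service sees the traveler's real, non-UK address.
blocked = uk_service_allows("198.51.100.23")

# With a UK VPN server the service sees the server's address instead.
allowed = uk_service_allows("203.0.113.5")
```

The service never learns where you physically are. It only sees the address the request arrives from, which is why connecting to a UK server is enough in this simplified picture.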

Unblocking and streaming inside the UK

If you live in the UK, you can use a VPN to pretend to be in another country and access content that is only available there, such as Hulu, HBO GO, and ESPN, to name a few. Another common use case for people in the UK is to use a VPN to watch US Netflix and unlock the exclusive titles available to viewers in the US.

Using a VPN to combat UK government surveillance

The UK is one of the most surveillance-heavy nations in the world. GCHQ is the government’s intelligence agency and it constantly monitors and snoops on everybody.

In addition, the government uses the Investigatory Powers Act 2016 to capture everybody’s data and web browsing histories from ISPs. In the UK, surveillance has become thoroughly widespread and completely pervasive, and the only way to stop your entire online presence from being tracked is with a VPN.

If you value your privacy and the privacy of your loved ones, using a VPN in the UK is highly recommended. If you don't use a VPN, your ISP will collect the following data about you and store it for 12 months:

- Your IP address

- Your personal details (address, payment details, etc.)

- A log of every website and the time you visited it

- Your communications metadata (emails, messages, etc.)

Surveillance in the UK is intense – and Brexit will likely only exacerbate matters. So be sure to prepare by investing in a secure VPN.

Hackers can’t snoop on what you do online

Wi-Fi hotspots are popular targets for hackers. These cybercriminals will attempt to intercept your data and steal your credit card information and other sensitive personal data if you're not protected by a VPN.

Internet privacy in the UK post-Brexit

Most experts agree that Brexit is going to give the government the power it needs to begin using the Snoopers Charter to its full extent. As a result, it is likely that Brexit will cause higher levels of internet surveillance.

Until now, the Snoopers Charter was barely enforced because it was found to contradict European regulations. With the EU no longer standing in its way, many experts fear things will go downhill rapidly.

With the chances of GCHQ snooping on your data higher than ever, and ISPs collecting all your metadata and web browsing history records for 12 months, there has never been a better time to protect your digital footprint.

A VPN will encrypt your traffic, making it harder for the government to track everything you do. Privacy is your right as a citizen: you voted the government in to protect you, not to snoop on you.

How much will a VPN cost you?

The average cost of a decent VPN in the UK is around $5 per month (roughly £3-4). However, this can vary a lot depending on the provider, whether you want additional features like a static IP, and how long you are willing to commit for.

VPN subscriptions are usually offered for one month, one year, or multiple years, and the longer you sign up for, the less you pay per month. For example, a one-month subscription can cost as much as $12 per month, but if you are willing to sign up for two years, this monthly cost can fall by as much as 80%.
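To make that saving concrete, here is a minimal sketch of the arithmetic in Python. The $12 price and the 80% reduction are the upper-end figures quoted above, not any specific provider's pricing, and the function names and the 24-month term are assumptions made purely for illustration.

```python
def discounted_monthly_price(monthly_price: float, discount: float) -> float:
    """Return the effective per-month price after a fractional discount."""
    if not 0 <= discount <= 1:
        raise ValueError("discount must be between 0 and 1")
    return round(monthly_price * (1 - discount), 2)


def total_cost(per_month: float, months: int) -> float:
    """Return the total paid over a term at a given per-month price."""
    return round(per_month * months, 2)


# Illustrative figures taken from the paragraph above (USD).
one_month_price = 12.00   # high-end price of a one-month subscription
max_discount = 0.80       # "as much as 80%" on a two-year plan
term_months = 24          # assumed length of the long plan

effective = discounted_monthly_price(one_month_price, max_discount)
print(f"Effective monthly price: ${effective:.2f}")
print(f"Two-year plan total: ${total_cost(effective, term_months):.2f}")
print(f"Paying monthly for two years: ${total_cost(one_month_price, term_months):.2f}")
```

Under these assumptions, the effective price works out to $2.40 per month, or $57.60 over two years, against $288.00 if you paid month by month for the same period.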

Monthly VPN subscriptions in the UK

If you are looking for a monthly VPN subscription, it is still possible to get a good deal. Just be aware that the real savings are in the longer subscription packages.

Cheapest monthly VPN: Proton VPN

Based on our recommended list above, the cheapest monthly VPN is Proton VPN at $5 per month (around £3-4). This is a very attractive price if you are looking to keep costs down. For this, you get access to the "basic package", which is suitable for everyday browsing and keeping you secure online. However, if you want the extra perks, like unblocking US Netflix in the UK, you may want to consider spending a little more.

Get Proton VPN Monthly Plan >>

Best value monthly VPN: PrivateVPN

Coming in at $7.12 per month, PrivateVPN is incredible value for money. Not only do you get online privacy and security, but this service is a real demon when it comes to streaming and unblocking. We pitted PrivateVPN against all the major streaming services, and rarely does it fail. What's more, it has a solid server network capable of handling speeds good enough for streaming in HD (provided your connection can handle it).

Get PrivateVPN Monthly Plan >>

Save 49% & get 3 extra months of ExpressVPN free with our exclusive offer

Can I get a free VPN for the UK?

Yes, if you're careful! There are some free VPNs we'd recommend. However, these are really only suitable for providing privacy on a day-to-day browsing basis. They generally would not be able to handle more data-intensive tasks like streaming, gaming, or torrenting. This is because they're often intended to just give the customer a flavor of what a VPN is about and what one can do – all in the hopes that the user will enjoy it enough to upgrade to the premium service.

We recommend that you take advantage of VPN free trials or money-back guarantees. All the VPNs listed in this article have them, and they will allow you to try out the full service before committing to a lengthy contract.

What about the unlimited free VPNs I've seen?

This is where you need to be careful. We have been reviewing VPNs since 2013 and we can tell you we have never come across an unlimited free VPN that doesn't steal your bandwidth or data and sell it to third parties. As you can imagine, this is the exact opposite of what a VPN is meant to do.

The issues with UK-based VPN services

There are a number of VPNs based in the UK. You may be wondering, "are UK-based VPNs safe to use?", given the increasing government involvement in data collection.

To answer this question bluntly: no, not if you value your privacy.

The UK's heavy-handed surveillance laws require ISPs, as well as VPNs, to log users' online activity and store it for 12 months. This means that if you use a UK-based VPN, it will be required to record what you do on its network and potentially hand it all over to the authorities upon request.

UK-based VPNs should be avoided at all costs for this very reason. The good news is that a VPN doesn't have to be based in the UK to be an excellent UK VPN. In the end, your best bet is to sign up with one of the quality VPN providers we highlight in this guide. These providers have been thoroughly researched and tested by our experts, and are all excellent at protecting user privacy while being based safely outside the jurisdiction of the overly intrusive UK regulatory landscape.

FAQs

Here are a few of the most common questions we get from our readers looking for a UK VPN. If you have additional questions, feel free to drop us a note in the comments section below!

Conclusion

Now that we have covered everything you need to know about choosing and using a VPN in the UK, let's review our top picks:

- ExpressVPN - The best VPN for the UK. ExpressVPN is fast and secure making it perfect for torrenting, streaming & gaming.

- NordVPN - A great VPN pick for the UK regardless of what device you use. NordVPN works great on all platforms, including iOS and Android.

- CyberGhost VPN - A UK VPN that is packed with value. You get 7 simultaneous connections and slick mobile apps for Android & iOS.

- Surfshark - A cheap VPN for the UK on our list. From $2.49 a month, you can unblock US Netflix and BBC iPlayer on all devices, including smart TVs.

- VyprVPN - A great all-round VPN choice for the UK. It has proprietary servers with fast speeds & improved levels of privacy.